The gap between proprietary and open source AI models for coding is narrowing fast. A year ago, self-hosting an LLM for development meant settling for significantly worse performance than cloud-based alternatives like GPT-4 or Claude. In 2026, the best open source models are closing in on proprietary leaders across benchmarks like

LiveBench, and some even outperform them on specific tasks like code generation and completion.

Whether you’re a solo developer who wants to keep code off third-party servers, a startup looking to cut API costs, or an enterprise with strict data compliance requirements, self-hosted open source LLMs have become a genuinely viable option for professional software development. In this guide, we’ll cover the best open source models you can self-host for coding, the tools to deploy them, and the hardware you need to get started.

Summary

Top Open Source LLMs for Coding (Self-Hostable):

- Kimi K2.6 Thinking - LiveBench Coding 78.57, Agentic Coding 58.33 - Get Kimi K2.6

- GLM 5.1 - LiveBench Coding 75.37, Agentic Coding 55.00 - Get GLM-5.1

- DeepSeek V4 Pro - LiveBench Coding 69.99, Agentic Coding 56.67 - Get DeepSeek-V4-Pro

- DeepSeek V3.2 - LiveBench Coding 75.69, Agentic Coding 46.67 - Get DeepSeek V3.2

- Qwen 3.6 27B - LiveBench Coding 71.78, Agentic Coding 50.00 - Get Qwen 3.6 27B

- MiniMax M2.5 - LiveBench Coding 70.70, Agentic Coding 51.67 - Get MiniMax M2.5

- Devstral 2 - LiveBench Coding 66.79, Agentic Coding 43.33 - Get Devstral 2

Best Self-Hosting Tools:

- Ollama - Easiest way to get started locally

- vLLM - Best for production serving

- LM Studio - Best GUI for desktop users

Open Source vs Proprietary: How Close Is the Gap?

Before diving into individual models, it’s worth understanding where open source stands relative to proprietary options.

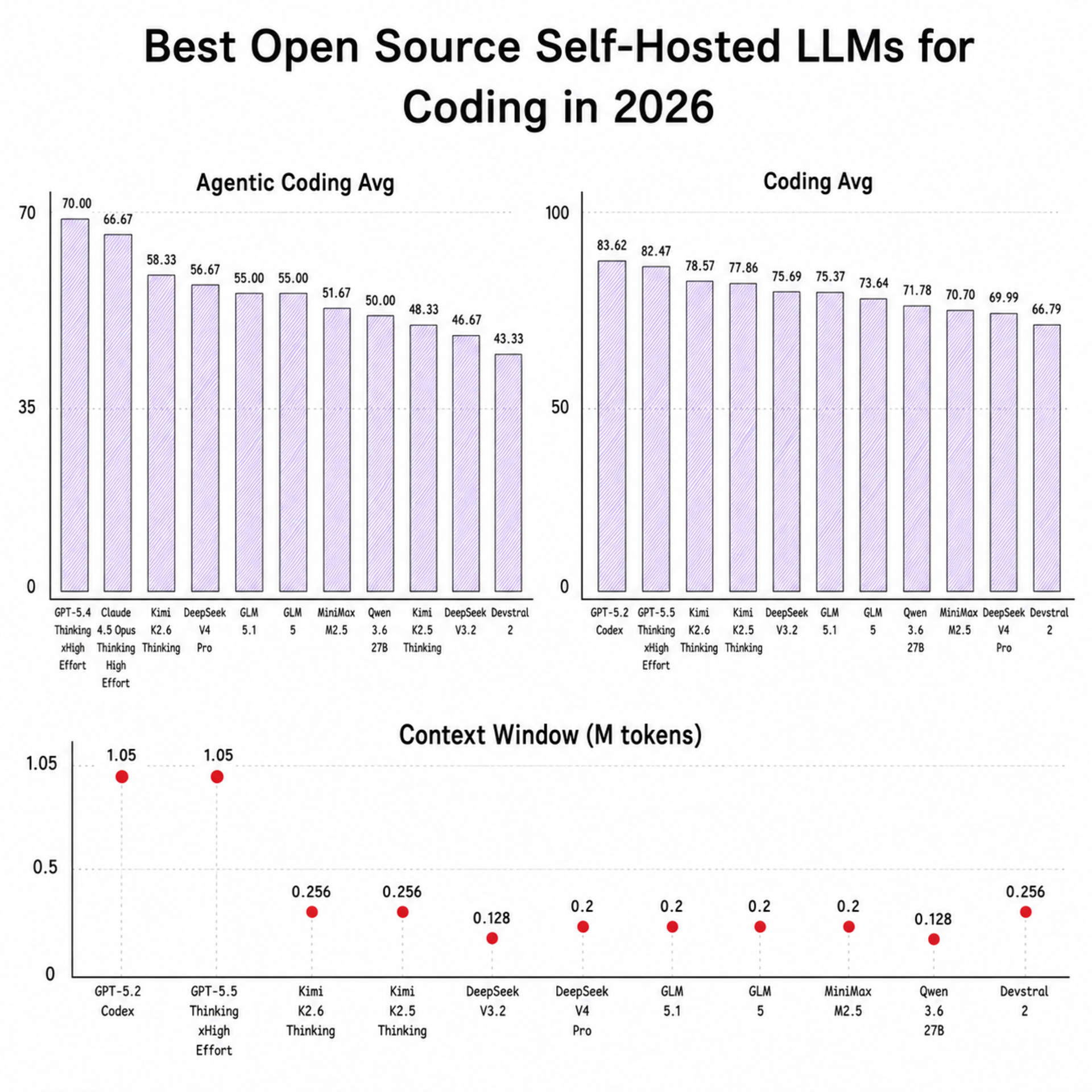

LiveBench is contamination-aware and tracks coding, agentic coding, reasoning, math, data analysis, language, and instruction following. The snapshot below is updated to May 12, 2026 using the latest scores.

LiveBench Agentic Coding Average (May 12, 2026 Snapshot)

| Model | Organization | Type | Agentic Coding Avg |

|---|

| GPT-5.4 Thinking xHigh Effort | OpenAI | Proprietary | 70.00 |

| GPT-5.3 Codex xHigh | OpenAI | Proprietary | 66.67 |

| Kimi K2.6 Thinking | Moonshot AI | Open Source | 58.33 |

| DeepSeek V4 Pro | DeepSeek | Open Source | 56.67 |

| GLM 5.1 | Z.AI | Open Source | 55.00 |

| GLM 5 | Z.AI | Open Source | 55.00 |

| MiniMax M2.5 | MiniMax | Open Source | 51.67 |

| Qwen 3.6 27B | Alibaba | Open Source | 50.00 |

| Kimi K2.5 Thinking | Moonshot AI | Open Source | 48.33 |

| DeepSeek V3.2 | DeepSeek | Open Source | 46.67 |

| Devstral 2 | Mistral | Open Source | 43.33 |

LiveBench Coding Average (May 12, 2026 Snapshot)

| Model | Organization | Type | Coding Avg |

|---|

| GPT-5.2 Codex | OpenAI | Proprietary | 83.62 |

| GPT-5.5 Thinking xHigh Effort | OpenAI | Proprietary | 82.47 |

| Kimi K2.6 Thinking | Moonshot AI | Open Source | 78.57 |

| Kimi K2.5 Thinking | Moonshot AI | Open Source | 77.86 |

| DeepSeek V3.2 | DeepSeek | Open Source | 75.69 |

| GLM 5.1 | Z.AI | Open Source | 75.37 |

| GLM 5 | Z.AI | Open Source | 73.64 |

| Qwen 3.6 27B | Alibaba | Open Source | 71.78 |

| MiniMax M2.5 | MiniMax | Open Source | 70.70 |

| DeepSeek V4 Pro | DeepSeek | Open Source | 69.99 |

| Devstral 2 | Mistral | Open Source | 66.79 |

On this May 12, 2026 snapshot, Kimi K2.6 Thinking is the strongest open-source entry across both key coding metrics in this guide: 78.57 Coding Avg and 58.33 Agentic Coding Avg. DeepSeek V4 Pro and GLM 5.1 remain close on agentic coding, while DeepSeek V3.2 still holds a strong coding score.

For the latest scores and full model list, visit the

LiveBench leaderboard directly.

Best Open Source LLMs for Coding

New in April-May 2026: GLM-5.1, DeepSeek-V4, Qwen3.6, and Kimi K2.6

Four major open-weight coding releases landed after this guide was originally published.

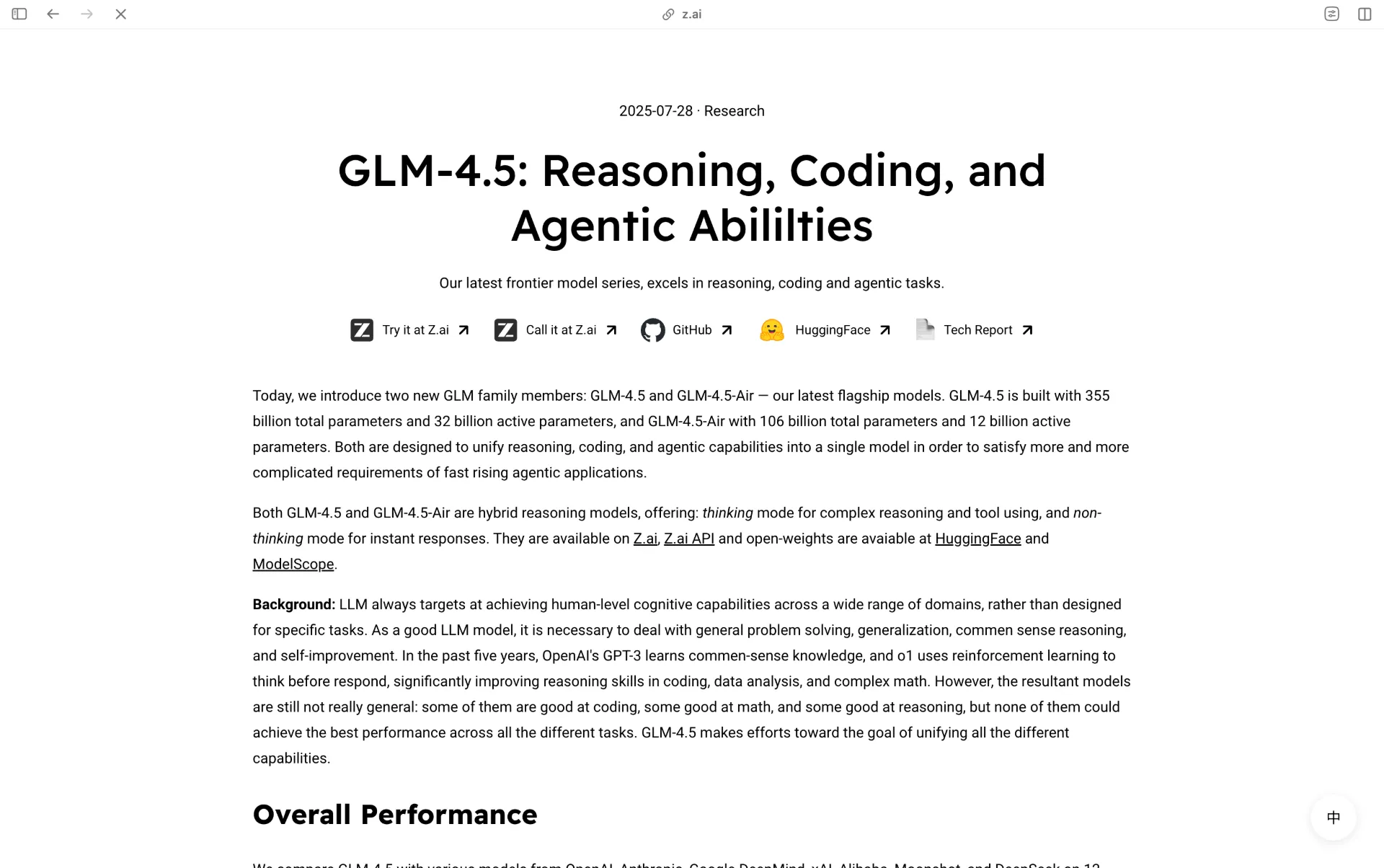

GLM-5.1 (Z.AI)

GLM-5.1 is Z.AI’s newer flagship open-weight coding model. Official documentation highlights 58.4 on SWE-Bench Pro (self-reported), up to 8-hour long-horizon execution, and a 200K context window with MIT licensing. It is also documented for local serving through vLLM and SGLang.

DeepSeek-V4 (DeepSeek)

DeepSeek lists

DeepSeek-V4 release date as April 24, 2026. The official model cards on Hugging Face expose both variants:

V4-Pro (1.6T total / 49B active) and

V4-Flash (284B total / 13B active), both with 1M context and MIT licensing. On the May 12, 2026 LiveBench snapshot, DeepSeek V4 Pro scores 69.99 (Coding Avg) and 56.67 (Agentic Coding Avg).

Qwen3.6 (Qwen Team / Alibaba)

The official

Qwen3.6 repository reports April 2026 open-weight drops for Qwen3.6-35B-A3B and Qwen3.6-27B. On the May 12, 2026 LiveBench snapshot, Qwen 3.6 27B scores 71.78 (Coding Avg) and 50.00 (Agentic Coding Avg). Qwen also reports strong model-card benchmark results for 35B-A3B, including 49.5 SWE-Bench Pro and 51.5 Terminal-Bench 2.0 (self-reported), with Apache 2.0 licensing.

Kimi K2.6 (Moonshot AI)

Moonshot lists Kimi K2.6 in its latest research feed on April 20, 2026, and the official model card reports major coding-agent gains over K2.5. A full profile is included below in section #2.

1. GLM-5 / GLM-5.1 (Zhipu AI) - Top-Tier Agentic Baseline

GLM-5 and

GLM-5.1 remain strong open-source options for long-context coding and agentic tasks. On the May 12, 2026 LiveBench snapshot, both score 55.00 on Agentic Coding, while Coding Average is 73.64 (GLM-5) and 75.37 (GLM-5.1). The family uses a large MoE architecture with 200K context support.

What makes GLM-5 particularly noteworthy is its training infrastructure. The entire model was trained on 100,000 Huawei Ascend 910B chips rather than NVIDIA GPUs, making it a significant milestone for non-NVIDIA AI hardware. Zhipu also introduced a novel reinforcement learning infrastructure called “Slime” that reduced hallucination rates from 90% to 34%.

On coding benchmarks, GLM-5 scores 90% on HumanEval and 77.8% on SWE-bench Verified according to Zhipu AI’s official reports. Its 200K context window is generous enough to handle analysis of large codebases in a single session.

Key Specs

- Architecture: MoE, 744B total / 40B active parameters

- Context Window: 200K tokens

- License: MIT (free commercial use)

- LiveBench Agentic Coding Avg: 55.00 (GLM-5 and GLM-5.1)

- LiveBench Coding Avg: 73.64 (GLM-5), 75.37 (GLM-5.1)

- SWE-bench Verified: 77.8% (self-reported by Zhipu AI)

- HumanEval: 90%

- Self-hosting: Supported via vLLM and SGLang; weights available on Hugging Face and ModelScope

2. Kimi K2.6 (Moonshot AI) - Current Open-Source Leader on LiveBench Coding + Agentic

Kimi K2.6 is Moonshot AI’s newest open-weight coding model line, listed on Moonshot’s

latest research timeline on April 20, 2026. On the May 12, 2026 LiveBench snapshot, Kimi K2.6 Thinking leads the open-source models listed in this guide with 78.57 Coding Avg and 58.33 Agentic Coding Avg.

Moonshot’s

technical write-up for K2.6 describes a larger agent-swarm setup, with up to 300 sub-agents and around 4,000 coordinated reasoning/execution steps for complex workflows. In practice, this is aimed at repo-level tasks where planning, tool use, and verification have to run over long trajectories.

On the official model card, Moonshot reports K2.6 scores of 58.6 (SWE-Bench Pro), 80.2 (SWE-Bench Verified), 66.7 (Terminal-Bench 2.0), and 89.6 (LiveCodeBench v6). These are vendor-reported numbers, but they position K2.6 as one of the strongest open-weight coding options currently available.

Key Specs

- Architecture: MoE, ~1T total / 32B active parameters

- Context Window: 256K tokens

- License: Modified MIT (commercial use allowed)

- SWE-Bench Pro: 58.6 (self-reported by Moonshot AI)

- SWE-Bench Verified: 80.2 (self-reported by Moonshot AI)

- Terminal-Bench 2.0: 66.7 (self-reported by Moonshot AI)

- LiveCodeBench v6: 89.6 (self-reported by Moonshot AI)

- LiveBench Coding Avg (May 12, 2026): 78.57

- LiveBench Agentic Coding Avg (May 12, 2026): 58.33

- Self-hosting: Recommended via vLLM or SGLang; production requires 2x H100 80GB or 4x A100 80GB, 512GB RAM

3. DeepSeek V3.2 - Coding 75.69, Agentic 46.67

DeepSeek has consistently pushed the boundaries of what open source models can achieve for coding. The V3.2 release scores 75.69 on LiveBench Coding Average and 46.67 on Agentic Coding, placing it among the top open source contenders. It features 671 billion total parameters with 37 billion active, using a Mixture of Experts architecture and a 160K context window. It’s released under the MIT license.

DeepSeek’s lineage in code-specific models runs deep. The original DeepSeek Coder series (1B to 33B) was trained on 2 trillion tokens composed of 87% code and 13% natural language. DeepSeek Coder V2 expanded to support 338 programming languages. V3.2 combines these strengths into a general model that excels at coding, scoring 73.1% on SWE-bench Verified.

The model’s API pricing is remarkably low at roughly $0.27 to $0.55 per million tokens, making it one of the most cost-effective options even before considering self-hosting. For local deployment, the smaller DeepSeek Coder models (6.7B) run comfortably on consumer hardware through Ollama or LM Studio, while the full V3.2 requires enterprise-grade infrastructure.

Key Specs

- Architecture: MoE, 671B total / 37B active parameters

- Context Window: 160K tokens

- License: MIT

- LiveBench Coding Avg: 75.69

- LiveBench Agentic Coding Avg: 46.67

- SWE-bench Verified: 73.1% (self-reported by DeepSeek)

- Self-hosting: Full model requires multi-GPU setup; smaller Coder variants run on consumer GPUs via Ollama

4. Devstral 2 (Mistral AI) - Coding 66.79, Agentic 43.33

Devstral 2 from Mistral AI is a 123 billion parameter model specifically designed for agentic software engineering. It scores 66.79 on LiveBench Coding Average and 43.33 on Agentic Coding. Released in December 2025, it scores 72.2% on SWE-bench Verified with a 256K context window, making it one of the most capable code-focused models available. Mistral describes it as 7x more cost-efficient than Claude Sonnet and 5x smaller than DeepSeek V3.2 while remaining competitive in benchmarks.

What makes the Devstral family compelling for self-hosting is the smaller sibling, Devstral Small 2 (24B parameters), which scores an impressive 68% on SWE-bench Verified. That’s remarkable for a model that runs on a single RTX 4090 or a Mac with 32GB of RAM. It also supports image inputs and comes with Apache 2.0 licensing, making it one of the most permissive options available.

Mistral also offers Vibe CLI, an open source terminal coding assistant powered by Devstral, giving you a ready-made development workflow out of the box.

Key Specs (Devstral 2)

- Parameters: 123B

- Context Window: 256K tokens

- License: Modified MIT

- LiveBench Coding Avg: 66.79

- LiveBench Agentic Coding Avg: 43.33

- SWE-bench Verified: 72.2% (self-reported by Mistral AI)

- Self-hosting: Multi-GPU recommended for full model

Key Specs (Devstral Small 2)

- Parameters: 24B

- Context Window: 128K tokens

- License: Apache 2.0

- SWE-bench Verified: 68.0% (self-reported by Mistral AI)

- Self-hosting: Single RTX 4090 or Mac with 32GB RAM

The

Qwen3-Coder family from Alibaba represents one of the most comprehensive open source coding model lineups available. The flagship model features 480 billion parameters with a Mixture of Experts design, and Alibaba describes it as “our most agentic code model to date.” There’s also a smaller 30B variant (3B active) for resource-constrained environments.

The more recent Qwen3-Coder-Next (80B total, 3B active) pushes the envelope further with hybrid attention combined with MoE, trained with large-scale reinforcement learning specifically for agentic tasks. It scores 70.6% on SWE-bench Verified, an impressive result for a model with only 3B active parameters.

Alibaba also provides

Qwen Code, an open source terminal coding agent optimized for Qwen3-Coder models. This gives developers a Claude Code or Aider-like experience powered entirely by open source infrastructure.

The broader Qwen ecosystem also includes Qwen 2.5 Coder (available in sizes from 0.5B to 32B), which remains one of the best mid-range options. The 32B Instruct variant scores 73.7 on the Aider benchmark (comparable to GPT-4o) and is readily available through Ollama.

Key Specs

- Architecture: MoE, up to 480B total parameters

- License: Apache 2.0

- SWE-bench Verified: 70.6% (Qwen3-Coder-Next, self-reported by Alibaba)

- Self-hosting: Qwen 2.5 Coder 32B runs on consumer hardware via Ollama; larger variants require multi-GPU

6. Llama 4 (Meta) - Largest Context Window (10M)

Llama 4 from Meta continues to be the most widely deployed open source model family, with over 650 million total downloads and roughly 9% of enterprise production workloads running on Llama variants. The Llama 4 family released in April 2025 includes Scout (109B total, 17B active, 10M context window), Maverick (400B total, 17B active, 1M context), and the announced but unreleased Behemoth (~2T total, 288B active).

While Llama 4 isn’t specifically a coding model, its massive context windows and multimodal capabilities (text and image input across 12 languages) make it highly versatile for development workflows. The code-specific Llama 4 Coder variant brings improved code generation, debugging, and completion accuracy.

The main caveat is licensing: Llama’s license does not meet the OSI Open Source Definition and includes restrictions for companies with very large user bases. For most developers and smaller organizations, this is a non-issue, but it’s worth noting compared to the MIT or Apache 2.0 licenses of other models on this list.

Key Specs

- Architecture: MoE, up to 400B total / 17B active (Maverick)

- Context Window: Up to 10M tokens (Scout)

- License: Llama Community License (restrictions for very large companies)

- Self-hosting: Scout and Maverick available via Ollama, vLLM; smaller variants run on consumer hardware

7. StarCoder 2 (BigCode / Hugging Face) - Most Auditable Training Data

StarCoder 2 is a collaboration between Hugging Face and ServiceNow under the BigCode project. Available in 3B, 7B, and 15B sizes, it was trained on 3.3 to 4.3 trillion tokens from The Stack v2, covering 619 programming languages. It uses Grouped Query Attention with a 16K context window.

StarCoder 2’s standout quality is its data transparency. Every training data source is documented with Software Heritage Identifiers (SWHIDs), making it the most auditable coding model available. This matters for enterprises concerned about IP and licensing compliance. The 15B model matches or outperforms CodeLlama 34B (a model twice its size), demonstrating strong efficiency.

While it doesn’t compete with the larger MoE models on raw benchmarks, StarCoder 2 remains an excellent choice for teams that need a lightweight, well-documented coding model they can run on modest hardware.

Key Specs

- Sizes: 3B, 7B, 15B

- Context Window: 16K tokens

- License: OpenRAIL (fully transparent training data)

- Self-hosting: Runs on consumer hardware via Ollama; 3B variant works on laptops

Honorable Mentions

Several other open source models deserve recognition for specific strengths:

- IBM Granite Code - Available from 350M to 34B parameters under Apache 2.0, trained on 116 programming languages with license-permissible data. Granite 4.0 introduces hybrid Mamba-2/transformer architecture using 70% less memory. Best choice for enterprise compliance.

- NVIDIA Nemotron-Cascade 2 - A 30B MoE with only 3B active parameters that achieves Gold Medal-level performance on competitive programming benchmarks (IMO, IOI, ICPC) with 20x fewer parameters than comparable models. Remarkable efficiency.

- Yi-Coder - From 01.AI, available in 1.5B and 9B sizes with 128K context and Apache 2.0 license. Yi-Coder 9B scores 85.4% on HumanEval, on par with DeepSeek Coder 33B at a fraction of the size.

- Qwen 3.5 - Released February 2026 with a 397B MoE model, featuring unified vision-language capabilities and support for 201 languages. One of the top-ranked open-weight models across multiple benchmarks.

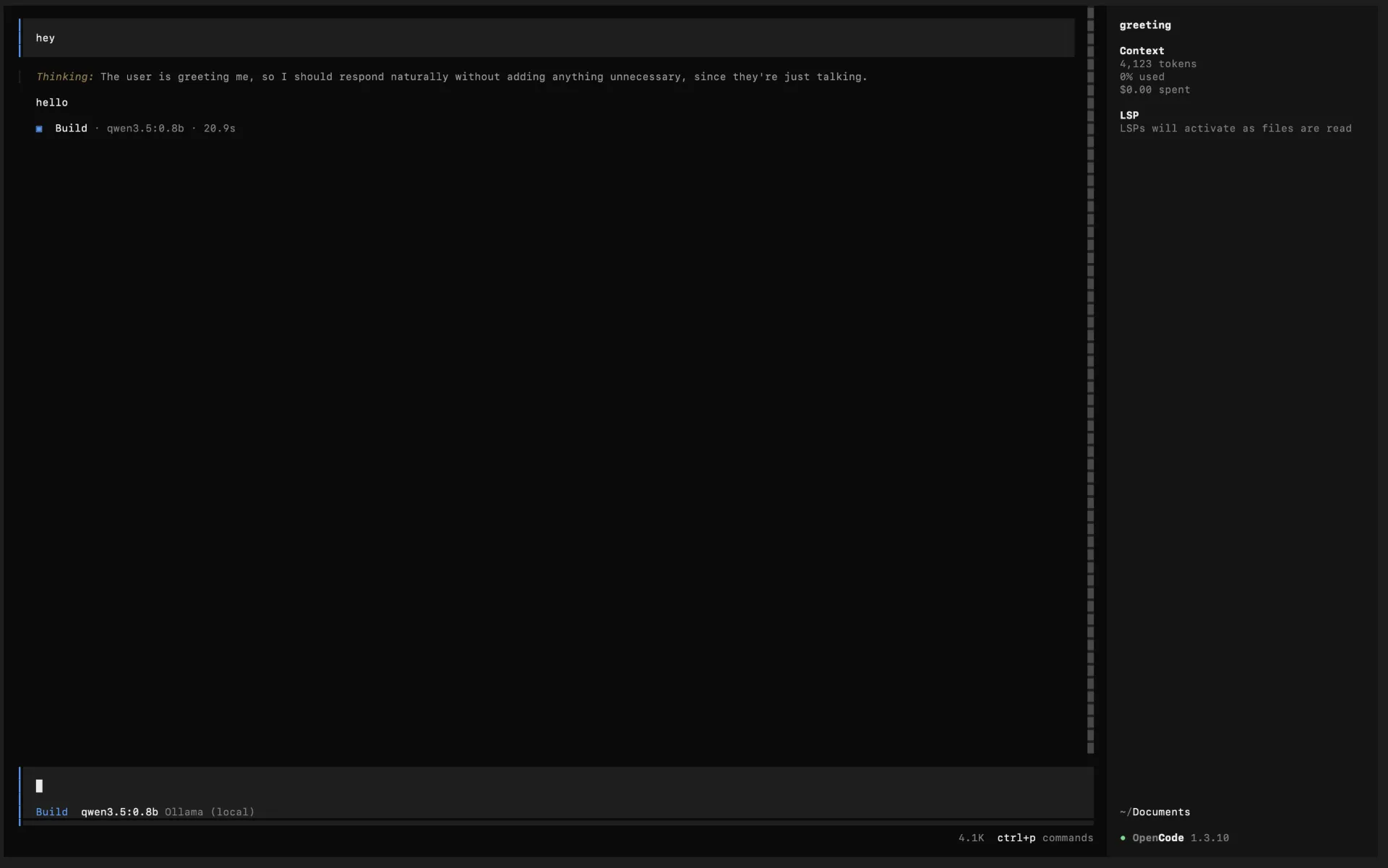

How to Use These Models with a Coding Agent

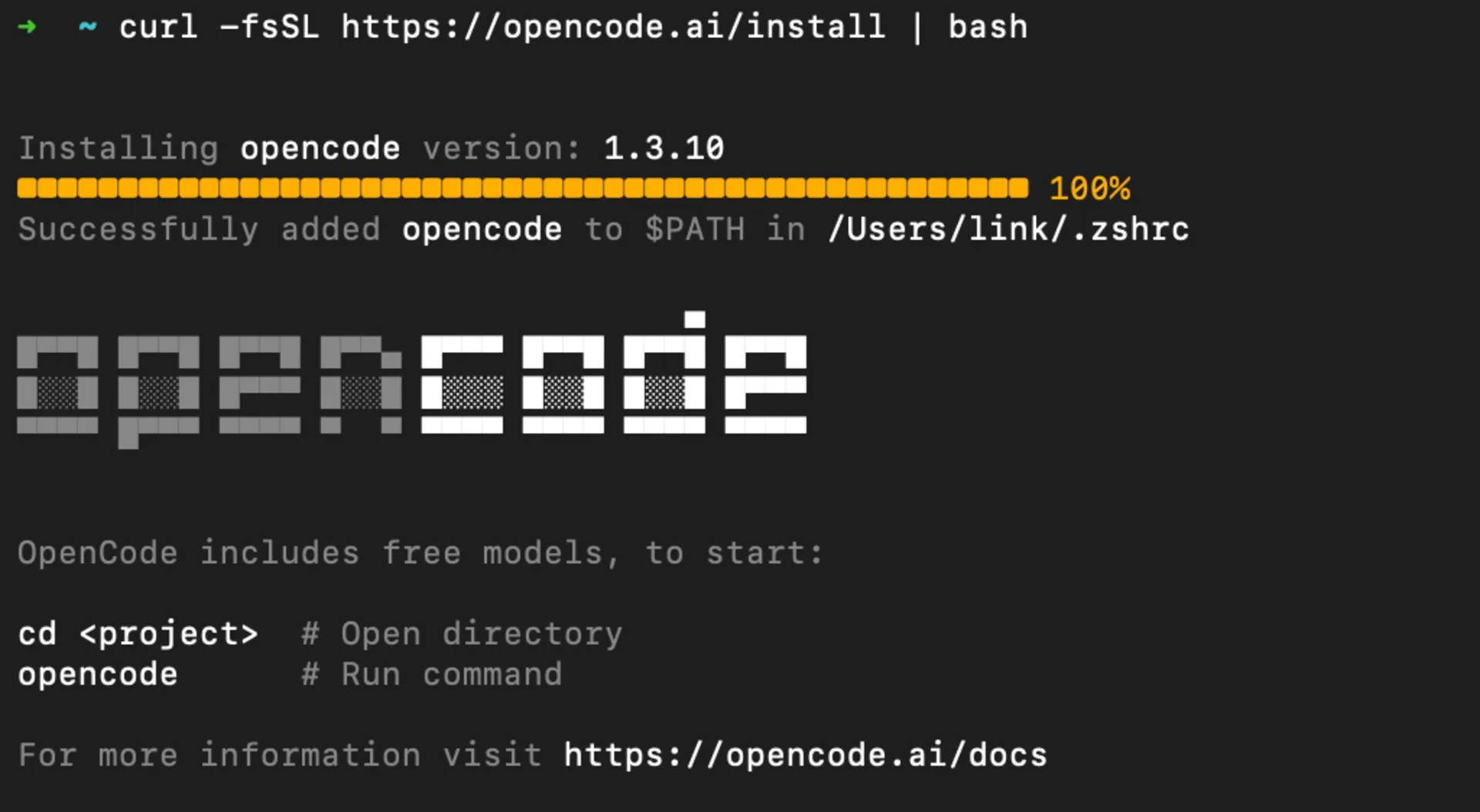

If you want a Claude Code or Aider-style workflow with self-hosted models, one of the easiest setups is OpenCode +

Ollama. This combination gives you a local coding agent with a simple terminal workflow and no cloud dependency.

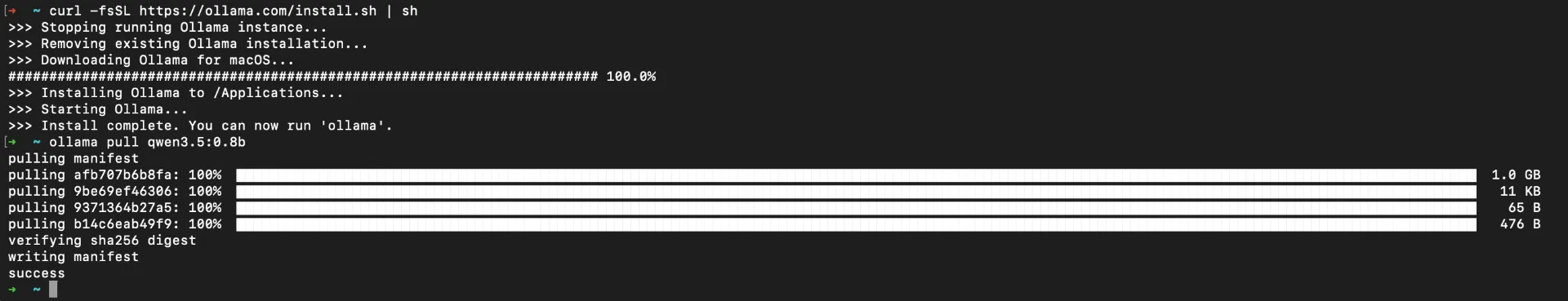

Easiest Setup: OpenCode + Ollama

If you’re using Ollama’s built-in Applications flow, the setup is even simpler. The current

Qwen 3.6 Ollama page lists a direct OpenCode launch command.

- Install Ollama

curl -fsSL https://ollama.com/install.sh | sh

- Install Opencode

curl -fsSL https://opencode.ai/install | bash

- Launch OpenCode directly through Ollama Applications

ollama launch opencode --model qwen3.6:35b-a3b

- Open your project and start working

Once OpenCode starts, point it at your repository and use it like any other terminal coding agent for explaining code, refactoring files, writing tests, or implementing features.

If you want a smaller local footprint, Ollama also provides smaller Qwen 3.6 variants (for example 27B-class options). Check the live Ollama model page for currently available tags.

Why This Setup Works Well

- Fastest setup path because Ollama can launch OpenCode directly as an application

- Runs fully local with no separate model gateway to configure

- Easy to scale up or down by swapping the Ollama model tag based on your hardware

How to Self-Host These Models Locally

Once you’ve picked a model, you need the right tools and hardware to run it. We’ve covered this extensively in our previous guides:

Quick Decision Guide

| Your Need | Recommended Model | Why |

|---|

| Best overall coding | Kimi K2.6 Thinking | Highest open-source mix in this snapshot: 78.57 Coding Avg and 58.33 Agentic Coding Avg |

| Best on consumer hardware | Qwen 3.6 27B or Devstral Small 2 | Solid coding scores with much smaller deployment footprint than trillion-scale models |

| Best tiny model (<10B) | Yi-Coder 9B or StarCoder2-3B | Runs on laptops, punches above weight |

| Best for agentic workflows | Kimi K2.6 or GLM-5.1 | Strong coding-agent benchmark claims and long-horizon multi-agent execution |

| Best for enterprise compliance | IBM Granite Code | Apache 2.0, ethics-vetted training data |

| Best efficiency per parameter | Qwen3.6-35B-A3B | Strong coding-agent scores from only 3B active parameters |

Conclusion

The open source LLM landscape for coding has matured dramatically, and the May 12, 2026 LiveBench snapshot tightened the race again. Kimi K2.6 Thinking currently leads this guide’s open-source set on both Coding (78.57) and Agentic Coding (58.33), with GLM 5.1 and DeepSeek V4 Pro close behind on agentic work.

For most developers, the practical recommendation is to start with Qwen 3.6 27B or Devstral Small 2 on local hardware, then move to Kimi K2.6, GLM 5.1, or DeepSeek V4 Pro if you need top-tier agentic performance and have enterprise GPUs. DeepSeek V3.2 remains a strong cost-to-quality baseline.

The 44% of organizations that cite data privacy as their top concern with LLM adoption now have no reason to hold back. Self-hosted open source models are production-ready for coding, and the gap with proprietary alternatives continues to shrink with each new release.